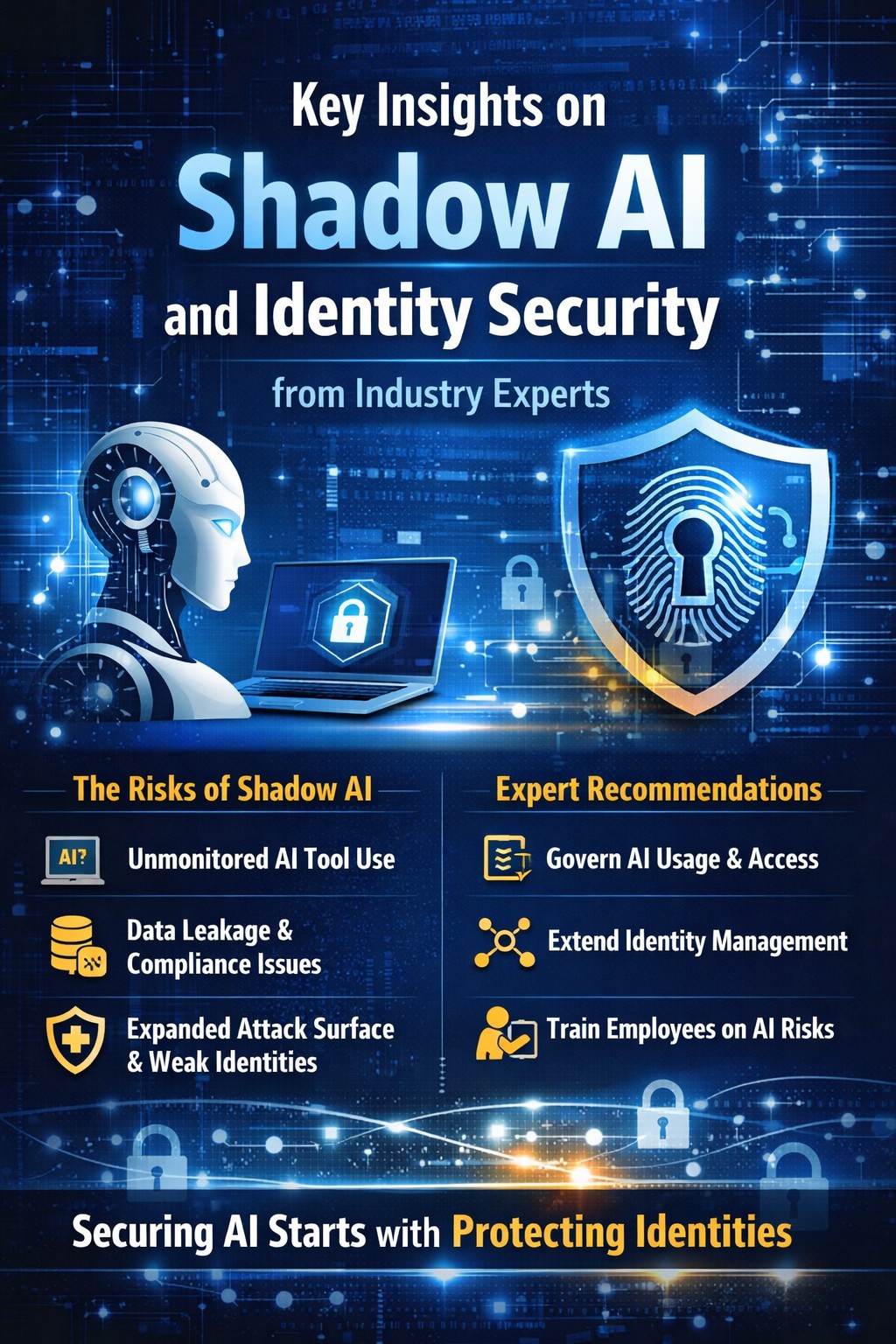

Key Insights on Shadow AI and Identity Security from Industry Experts

🔍 Why Shadow AI Is a Growing Concern

Shadow AI refers to the unsanctioned or unmonitored use of AI tools, often generative AI, by employees or business units without formal IT or cybersecurity oversight. It resembles the older “shadow IT” problem but is riskier: these tools process sensitive data directly, and AI-driven workflows can become embedded in business decisions without anyone having visibility into them.

Industry experts agree this trend is accelerating because AI has become ubiquitous across SaaS applications and standalone services. Employees use tools like ChatGPT, Claude, or embedded AI features in workplace apps for quick decisions, analysis, or automation—often bypassing security teams entirely.

🛡️ Identity Security Risks Amplified by Shadow AI

1. Expanding Attack Surfaces

Unauthorized AI tools often connect to sensitive systems without proper authentication or visibility. Each AI instance effectively creates a new identity—one that may have unseen access to enterprise data if not governed properly.

This increases the attack surface dramatically, as unmanaged identities (including AI agents themselves) can:

Handle sensitive data off-platform

Circumvent traditional security and identity management systems

Open vectors for lateral movement or credential theft
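To make the “new identity” point concrete, here is a minimal sketch. It assumes that access logs can be reduced to (identity, resource) pairs and that the IAM system can export the set of registered identities. The `AccessEvent` type, the `find_unmanaged_identities` function, and all identity names are hypothetical. The idea is simply that any identity seen touching resources but missing from the registry, such as an AI plugin's token, is a candidate shadow identity.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set


@dataclass(frozen=True)
class AccessEvent:
    """One observed access to an enterprise resource (hypothetical log schema)."""
    identity: str  # user, service account, or AI agent ID as it appears in the log
    resource: str  # system or dataset that was accessed


def find_unmanaged_identities(
    events: Iterable[AccessEvent], registered: Set[str]
) -> Dict[str, List[str]]:
    """Map each identity absent from the IAM registry to the resources it touched."""
    unmanaged: Dict[str, Set[str]] = {}
    for event in events:
        if event.identity not in registered:
            unmanaged.setdefault(event.identity, set()).add(event.resource)
    # Sort resources so the report is stable and easy to compare between runs.
    return {identity: sorted(resources) for identity, resources in unmanaged.items()}


if __name__ == "__main__":
    registered = {"alice", "svc-backup"}
    events = [
        AccessEvent("alice", "crm"),
        AccessEvent("gpt-plugin-7f3a", "crm"),
        AccessEvent("gpt-plugin-7f3a", "finance-db"),
        AccessEvent("svc-backup", "finance-db"),
    ]
    print(find_unmanaged_identities(events, registered))
    # {'gpt-plugin-7f3a': ['crm', 'finance-db']}
```

In practice the identity field would come from whatever the logs record (OAuth client IDs, API keys, service principals), and reliably matching those to registry entries is the hard part, which this sketch does not model.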

2. Frequent Data Exposure and Compliance Gaps

Security telemetry shows that data policy violations involving AI are rising sharply. In one report, the typical organization recorded 223 incidents per month of employees sending sensitive data to external AI tools—often via personal or unmanaged accounts.

This includes regulated data (financial, health, intellectual property), which can expose enterprises to:

Data breaches

Industry compliance violations

Audit and legal risks
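One common mitigation for this kind of exposure is to screen outbound prompts before they leave the network. The sketch below is a deliberately simplified illustration, not a real DLP engine. The regular expressions, the `SENSITIVE_PATTERNS` table, and the rule “block sensitive content unless the destination tool is sanctioned” are all assumptions chosen for clarity.

```python
import re
from typing import List

# Illustrative detectors only; production DLP uses far richer classifiers.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "confidential_label": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def scan_prompt(text: str) -> List[str]:
    """Return the names of the sensitive-data patterns found in an outbound prompt."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]


def allow_outbound(text: str, destination_sanctioned: bool) -> bool:
    """Hypothetical policy: prompts with sensitive data may only go to sanctioned AI tools."""
    return destination_sanctioned or not scan_prompt(text)


if __name__ == "__main__":
    prompt = "Summarize this CONFIDENTIAL memo for bob@example.com"
    print(scan_prompt(prompt))                                   # ['email', 'confidential_label']
    print(allow_outbound(prompt, destination_sanctioned=False))  # False
```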

3. Identity Weaknesses Enable Breaches

Security experts have found that weak identity controls sit at the heart of most breaches, and the problem is worsening as AI agents behave like machine identities. A recent incident response report found that 90% of breaches involved weak identity controls, while AI agents and automated systems keep expanding the number of identities that attackers can target or exploit.

4. AI Agents Need “Background Checks”

Cisco leadership emphasized that as AI agents take on more autonomous tasks, they must be treated like employees—with background checks, verification, and trust validation—to ensure they don’t act unpredictably or introduce security gaps.

This reflects a broader view among experts: identity management must expand to include machine, bot, and agent identities, not just human users.
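The “background check” idea can be pictured as a gate an agent must pass before it is granted access. The following is a minimal sketch under stated assumptions: the `AgentIdentity` fields (an accountable human owner, a verification flag, a review expiry date, and approved scopes) and the `check_agent` function are hypothetical, loosely modeled on how employee access is typically onboarded and periodically re-certified.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional, Set, Tuple


@dataclass
class AgentIdentity:
    """Hypothetical registry entry for an AI agent, kept like an employee record."""
    agent_id: str
    owner: Optional[str]      # accountable human; None means nobody vouches for the agent
    verified: bool            # passed onboarding checks (the "background check")
    review_expires: datetime  # access must be re-certified after this moment
    scopes: Set[str] = field(default_factory=set)


def check_agent(agent: AgentIdentity, requested_scope: str, now: datetime) -> Tuple[bool, str]:
    """Return (allowed, reason), applying the checks in order and failing closed."""
    if agent.owner is None:
        return False, "no accountable owner"
    if not agent.verified:
        return False, "agent not verified"
    if now >= agent.review_expires:
        return False, "access review expired"
    if requested_scope not in agent.scopes:
        return False, f"scope {requested_scope!r} not granted"
    return True, "ok"


if __name__ == "__main__":
    bot = AgentIdentity(
        agent_id="invoice-bot",
        owner="carol",
        verified=True,
        review_expires=datetime(2030, 1, 1, tzinfo=timezone.utc),
        scopes={"invoices:read"},
    )
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    print(check_agent(bot, "invoices:read", now))  # (True, 'ok')
    print(check_agent(bot, "payroll:read", now))   # (False, "scope 'payroll:read' not granted")
```

Requiring a named human owner is the design choice doing most of the work here: an agent nobody is accountable for is denied before any other check runs.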

⚖️ Expert Guidance: Balancing Innovation with Security

Governance Is Crucial

Experts stress that simply banning shadow AI isn’t practical. Instead:

Document all sanctioned AI tools and enforce usage policies

Monitor AI tool usage with visibility tools that can detect unauthorized access

Classify identities by risk and enforce risk-based access controls

Extend identity and access management (IAM) to include AI agents and machine identities

These measures help prevent AI tools from becoming backdoors into corporate systems.
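The monitoring step can start as something quite simple: comparing web proxy traffic against a list of known AI services and the subset the organization has sanctioned. In this sketch the log format (a list of `(user, url)` pairs), both domain lists, and the `shadow_ai_usage` function are assumptions for illustration. ChatGPT and Claude appear only because the article mentions them, and `ai-tool.example.com` is a placeholder.

```python
from collections import Counter
from typing import Iterable, Tuple
from urllib.parse import urlparse

# Illustrative lists; a real deployment would pull these from a maintained catalog.
KNOWN_AI_DOMAINS = {"chatgpt.com", "claude.ai", "ai-tool.example.com"}
SANCTIONED_AI_DOMAINS = {"claude.ai"}


def shadow_ai_usage(proxy_log: Iterable[Tuple[str, str]]) -> Counter:
    """Count requests per (user, host) to known AI services that are not sanctioned."""
    hits: Counter = Counter()
    for user, url in proxy_log:
        host = urlparse(url).hostname or ""  # urlparse lowercases the hostname
        if host in KNOWN_AI_DOMAINS and host not in SANCTIONED_AI_DOMAINS:
            hits[(user, host)] += 1
    return hits


if __name__ == "__main__":
    log = [
        ("dave", "https://chatgpt.com/c/123"),
        ("dave", "https://chatgpt.com/"),
        ("erin", "https://claude.ai/new"),
        ("frank", "https://intranet.corp.example/wiki"),
    ]
    print(shadow_ai_usage(log))  # Counter({('dave', 'chatgpt.com'): 2})
```

This version matches hostnames exactly, so subdomains and AI features embedded inside sanctioned SaaS apps would slip through; a fuller version would match domain suffixes and draw on application-level telemetry.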

Employee Awareness and Policy Training

Risk reports show that many users lack training on AI security: nearly 60% had never been taught about the data risks of using AI. Experts recommend structured training that covers which tools are approved and how to handle data responsibly.

Cross-Functional Collaboration

Industry thought leaders encourage security, IT, compliance, legal, and business units to collaborate on AI governance. This shared approach helps:

Define acceptable AI use cases

Clarify identity and data boundaries

Ensure rapid policy updates as tools evolve

📊 What This Means for Identity Security

Shadow AI changes the identity security model in several key ways:

✔ AI adds machine-level identities that often bypass traditional human-centric IAM frameworks.

✔ Identity governance must cover AI agents, not just employees and services.

✔ Security controls must track who or what is accessing data, not just where it is stored.

✔ Zero trust and continuous verification are essential to mitigate unauthorized AI access.

✔ AI governance frameworks must evolve to include model usage, data flow, and identity robustness.
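Continuous verification, in the zero trust sense, means every request is evaluated on its own merits rather than trusted because an earlier one succeeded. Below is a minimal sketch, assuming a hypothetical policy that caps credential lifetime by identity type (shortest for AI agents) plus a simple identity-to-scope grant table. `AccessRequest`, `authorize`, and `MAX_TOKEN_LIFETIME` are illustrative names, not any product's API.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional, Set

# Hypothetical policy: maximum credential lifetime, in seconds, per identity type.
MAX_TOKEN_LIFETIME = {"human": 8 * 3600, "service": 3600, "ai_agent": 900}


@dataclass(frozen=True)
class AccessRequest:
    identity: str
    identity_type: str  # "human", "service", or "ai_agent"
    issued_at: float    # Unix time the presented token was issued
    expires_at: float   # Unix time the presented token expires
    scope: str          # permission being exercised, e.g. "crm:read"


def authorize(req: AccessRequest, grants: Dict[str, Set[str]], now: Optional[float] = None) -> bool:
    """Evaluate a single request from scratch; nothing carries over from earlier requests."""
    now = time.time() if now is None else now
    max_lifetime = MAX_TOKEN_LIFETIME.get(req.identity_type)
    if max_lifetime is None:
        return False  # unknown identity type: deny by default
    if req.expires_at - req.issued_at > max_lifetime:
        return False  # credential lives longer than policy allows for this type
    if not (req.issued_at <= now < req.expires_at):
        return False  # token not yet valid or already expired
    return req.scope in grants.get(req.identity, set())


if __name__ == "__main__":
    grants = {"report-agent": {"crm:read"}}
    req = AccessRequest("report-agent", "ai_agent", issued_at=1000.0, expires_at=1600.0, scope="crm:read")
    print(authorize(req, grants, now=1200.0))  # True
    print(authorize(req, grants, now=1700.0))  # False: token expired
```

Real deployments would push these checks into an identity provider or policy engine and add signals such as device posture; the point of the sketch is only that the same gate runs on every call.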

🧠 Final Takeaway

Industry experts are clear: shadow AI isn’t just a compliance annoyance. It fundamentally changes which identities get verified, what can access sensitive systems, and how trust boundaries are enforced in the enterprise. Without clear governance, visibility, and identity controls, organizations risk data leakage, unauthorized access, regulatory penalties, and reputational damage.

The future of secure AI adoption hinges on treating AI-related identities with the same (or greater) scrutiny as human identities, and on implementing governance that keeps pace with innovation rather than lagging behind it.

Read More: https://technologyaiinsights.c....om/takeaway-from-obs